Analyzing Growing Plants from 4D Point Cloud Data

We introduce a framework to analyse time-lapse 3D scans of growing plants, focusing in particular on accurately localizing and tracking topological events such as budding and bifurcation. We evaluate our approach on multiple data sets and use the results either to animate static virtual plants or to attach them directly to physical simulators.

Yangyan Li, Shenzhen Institute of Advanced Technology

Xiaochen Fan, Shenzhen Institute of Advanced Technology

Niloy J. Mitra, University College London

Daniel A. Chamovitz, Tel Aviv University

Daniel Cohen-Or, Tel Aviv University

Baoquan Chen, Shenzhen Institute of Advanced Technology and Shandong University

Reconstructing Detailed Dynamic Face Geometry from Monocular Video

We present a method for capturing face geometry from monocular video. It works under uncontrolled lighting and reconstructs expressive motion, including high-frequency facial detail. After a simple manual initialization, the capture process is fully automatic. We demonstrate performance capture results for long, complex sequences recorded both indoors and outdoors.

Pablo Garrido, Max Planck Institute for Informatics

Levi Valgaerts, Max Planck Institute for Informatics

Chenglei Wu, Max Planck Institute for Informatics

Christian Theobalt, Max Planck Institute for Informatics

Inverse Dynamic Hair Modeling with Frictional Contact

We present a method for converting hair geometry into a physics-based hair model such that the static hair pose, under gravity and frictional contact, accurately matches the input geometry. Our method was used to animate both artistic hairstyles and hair data reconstructed with recent capture techniques.

Alexandre Derouet-Jourdan, INRIA and CNRS

Florence Bertails-Descoubes, INRIA and CNRS

Gilles Daviet, INRIA and CNRS

Joelle Thollot, INRIA and CNRS

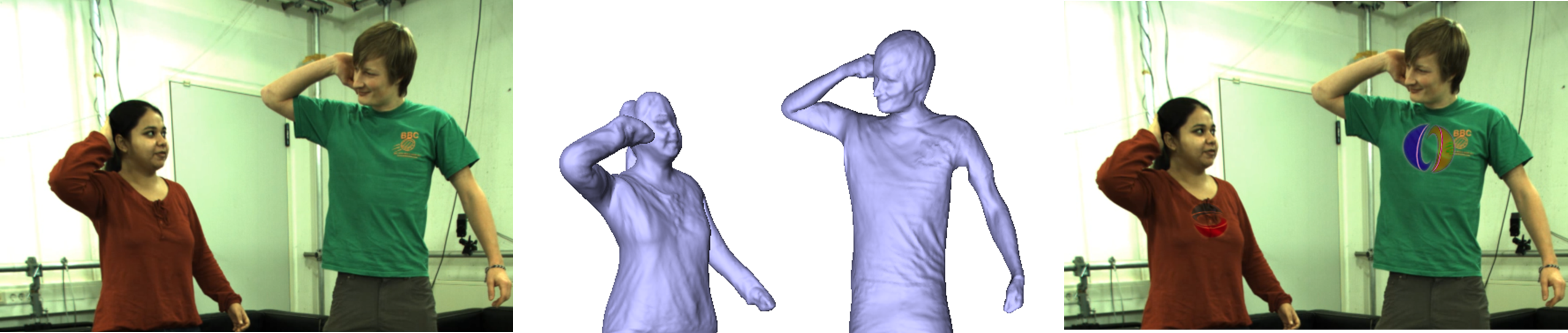

On-Set Performance Capture of Multiple Actors With A Stereo Camera

We describe a new method that tracks the skeletal motion and detailed surface geometry of one or more actors from footage recorded with a stereo rig that is allowed to move. It succeeds on general sets with uncontrolled backgrounds and uncontrolled illumination.

Chenglei Wu, Max Planck Institute for Informatics

Carsten Stoll, Max Planck Institute for Informatics

Levi Valgaerts, Max Planck Institute for Informatics

Christian Theobalt, Max Planck Institute for Informatics